About Me

I’ve worked in a wide variety of very public roles and written a number of books. In my “real life” I’ve had an audience varying from hundreds of thousands to millions over the years, across big media, online media, and academic media.

Teaching

Some of you may also know me from the classroom, as I’ve taught at a decent array of major universities, in topic areas from linguistics to anthropology to sociology to cultural studies and media. I am not currently teaching.

Companies and Brands

If you’re wondering if I’m the “same Aron Hsiao that…” then, in fact, I probably am. I won’t mention all of the companies, brands, and publications here because many of them won’t want to be directly associated with a blog like this one.

On Google

But if you’ve searched Google for “Aron Hsiao” then you’ve found me. The writer me, the professor me, the photographer me, the technology expert me, and so on. All of those pages and pages of results are, in fact, me. I am not aware of any other Aron Hsiao who has, in the last decade or more, ranked in the first dozen-plus pages of Google’s results.

Born February 29th, 1976

Ph.D. Sociology (The New School, 2014)

M.A. Social Science (Chicago, 2004)

B.A. Anthropology (Utah, 2001)

B.A. English (Utah, 2001)

7 Books

Thousands of articles

1 Life

2 Kids

2 Goldfish

2 Cats

Lived in Salt Lake City, New York City, Los Angeles, Chicago, Portland, and now… Provo.

Myers-Briggs INFP/INTP

I started “blogging” for the first time in 1999 at twenty-three years old, as I was going through my first serious breakup. Without meaning to, I continued to blog on a personal basis more or less without interruption after that. Now it’s been going on seventeen years. All of that content (well, most of it) is here, in one place.

In professional life, I have also ended up spending a decent amount of time blogging for others, for an income. I still do.

But after all these years, Leapdragon remains home.

Many have questioned the wisdom of maintaining a site like this one, and from 2007 through 2015 I kept it increasingly obscure online. I have grown tired, however, of hiding myself behind a “professional” cardboard cutout. I’m forty years old and my life, like the lives of many others, gets more complicated by the day, personally and professionally.

It’s time to just be me again, in public, and let the chips fall where they may. So here I am.

Politics: Mixed—Old Left + Old Right (Fuck the SJWs)

Music: Sonic Youth, Einstürzende Neubauten

Novel: 2666, Roberto Bolaño

Operating Systems: Mac OS, Linux (Android)

Aquarium Fish: Common goldfish, fully grown

Illumination Technology: Neon tubing

Rag: Counterpunch

Academic Work: Illuminations, Walter Benjamin

Work of Art: Boulevard of Broken Dreams, Helnwein

Art Medium: Still photography

Club/Pub: The Pub, Ida Noyes Hall, University of Chicago

City: New York City

Place: Antelope Island, Syracuse, Utah

Fabrication Material: Leather

Drink: Green Chartreuse

Beach: Ellwood Beach, Goleta, California

Design Language: Swiss/Modern/Bauhaus

Season: Fall

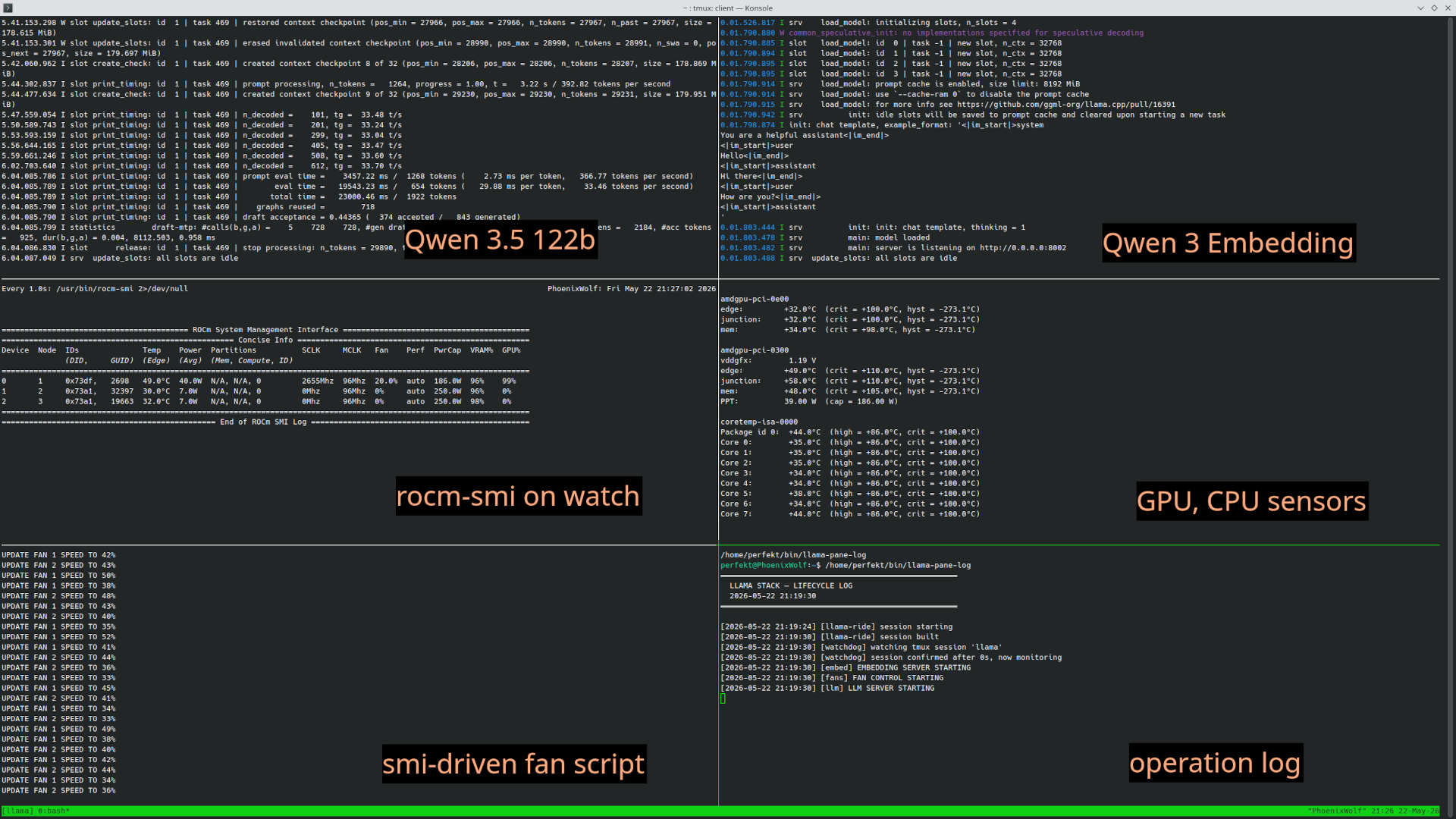

So someone was asking if I ran LM Studio locally and I said no, and they said Ollama and I said no, llama.cpp directly, and they were like, isn’t that pretty bare bones and hard to use and keep up? So I promised a screen grab and a bit of exposition. Rather than send it by email, though, I’m just gonna post it for you. So, here we go.

First thing, I run a multi-system setup. I have two headless ThinkCentre m93p tiny machines for the actual agents. Each one is a Core i5 with 16GB RAM and a 230GB SSD, and each one is about the size of three old CD jewel cases stacked on top of each other, so two m93p systems still take up… almost no space at all. Right now, one runs OpenClaw and one runs multiple coding harnesses. Having each of them on its own metal gives more safety re: access to the local environment without my having to futz around with virtualization.

I interact with the claw mostly on Discord, and with the others over Tailscale SSH.

Inference actually runs on the machine I use interactively, which is a 3-GPU setup with 76GB VRAM total along with a Core i9-9900k and 128GB DDR4. Two of the GPUs are Radeon Pro data center cards; it takes a bit of hacking and home engineering to use these safely, as they don’t have onboard active cooling. A 3D printer is your friend here. I actually run a fairly stable model selection: almost always Qwen 3.5 122B on the GPUs, plus Qwen 3 Embedding on the CPU just for memory and vector search stuff.

I then have a service set up with systemctl that launches a bunch of scripts that are bunged into a tmux session. Each script is lightly watchdogged so that if it goes down, the crash is logged and it comes back up and of course if the whole mess goes down systemd brings the service back up from scratch. So the tmux session is available on boot if I choose to attach to it, and of course if I’m remote (say, with Discord on a laptop) I can still open a shell, ssh in, and attach to the tmux session to monitor if I want. It looks like this:

Point being, I treat both inference and embedding as OS services that are always running in the background on different ports; I don’t really treat them like apps. The claw agent is also just a service. It’s always running on its headless machine, and it hasn’t been offline since Feb. except to run updates (always an adventure with OpenClaw). The other machine with the coding harnesses is closer to “app” use, as those really aren’t doing anything unless I log in to start/use them. But for the most part, when I said “mine is more like infrastructure,” that’s what I meant. I don’t really play with models much. I did early on, but it quickly became clear that Qwen 122B is the hot target for balancing performance and quality for any VRAM quantity from 64GB to probably four times that. Nothing else really comes close.
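Since everything here runs as a background service on its own port, the simplest liveness check is a TCP connect to each port. A minimal sketch using only the Python standard library; the service names and port numbers are hypothetical placeholders (the post doesn’t list the real ones), picked to resemble common defaults.

```python
import socket


def is_listening(host, port, timeout_s=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout_s):
            return True
    except OSError:
        return False


# Hypothetical service map; the actual ports are not given in the post.
SERVICES = {
    "chat inference": ("127.0.0.1", 8080),
    "embedding": ("127.0.0.1", 8081),
    "litellm proxy": ("127.0.0.1", 4000),
}

if __name__ == "__main__":
    for name, (host, port) in SERVICES.items():
        state = "up" if is_listening(host, port) else "DOWN"
        print(f"{name:15} {host}:{port} {state}")
```

Swapping 127.0.0.1 for the inference box’s address lets the same check run from another machine (say, over Tailscale). An open port only proves something is listening, not that the model finished loading, so an HTTP request to the service would be the next step up.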

Right now I’m running ROCm builds from the lemonade repository. At first I was building from AMD’s sources myself, but tracking releases was a PITA with stuff happening so fast. I’m happy to let someone else build for me, so now I just run lemonade’s llama.cpp builds: they track the nightlies and get all of the latest merges, and the builds are stable and performant. The v620s aren’t the fastest things on earth for this role, as they were clearly intended for partitioned/multi-tenant graphics workloads and lack P2P, BUT they’re cheap as dirt and have 32GB VRAM each. So if you’re okay with 35 tokens/sec chat and 50-60 tokens/sec code, they’re a way to get to a 122B model locally without spending what it costs to buy a car.

Oh, I forgot: I also route the whole thing through LiteLLM, which runs on the same machine as the inference, in order to get a working version of /v1/responses/ rather than /v1/completions/, as the caching behavior of responses is more sane. That’s important at 122B so that you don’t have to re-run the entire prefill each turn, which would be painful, especially with claw-based agents, since they run with something like 50k context at the first turn (which is also why nobody wants to run them on paid tokens). Completions is supposed to be okay at this, but in practice it’s not; responses is better.
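Some rough arithmetic shows why cache reuse matters with a 50k-token starting context. This is a sketch under simplifying assumptions that are mine, not the post’s: every turn appends the same number of new tokens (2,000 here, a made-up figure), a working prefix cache means only those new tokens need prefill, and a cache miss means the whole context is processed again.

```python
def prefill_tokens(initial_ctx, new_per_turn, turns, cached):
    """Total tokens pushed through prefill over a multi-turn session.

    Turn 0 always prefills the full initial context. On later turns, a working
    prefix cache only has to prefill the newly appended tokens; without one,
    the entire (growing) context is reprocessed every turn.
    """
    total = 0
    ctx = initial_ctx
    for turn in range(turns):
        if cached and turn > 0:
            total += new_per_turn
        else:
            total += ctx
        ctx += new_per_turn
    return total


if __name__ == "__main__":
    for cached in (False, True):
        n = prefill_tokens(50_000, 2_000, turns=10, cached=cached)
        print(f"cached={cached}: {n:,} prefill tokens over 10 turns")
```

Under these assumptions, ten turns cost 590,000 prefill tokens without caching versus 68,000 with it, nearly a 9x difference, and the gap grows with every additional turn.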

All of this said, there are also more changes coming. There is an X399 board and a Threadripper waiting to replace the i9-9900k shortly, so that even without P2P we at least get x8/x16 to the CPU independently for each card, rather than x4 shared amongst the entire PCIe pool. I also have another v620 to add (for 108GB VRAM total, which will let me move from q4 to q6 on the 122B model and open up the full 256k context). There will probably be two rounds of changes here. The 9900k -> Threadripper swap will be relatively easy on some upcoming Saturday. The third v620 is a bit harder, as I have to figure out how to power it. 😀 I have a 1200W PSU now and a fat case, but I may need a 1500W when all is said and done. I definitely will need more connectors than I currently have. Each GPU takes 2x 12V connectors, and of course the mainboard wants more, too, after the switch. So… the problem isn’t the high tech stuff; as always these days, the complexity is ironically in good old-fashioned wires and amps.

But anyway, that’s all to-do.

Hope that’s helpful!

So the state of things in local inference on my non-sleek non-Strix Halo non-Mac Studio build is that I’ve settled on:

- The dual Radeon v620s running the chat and orchestrator model, which right now is Qwen 3.6 35B A3B
- The empty space on the RX 6700xt being used for the local embedding model, which right now is qwen3-embedding

The reason for this is that the 6700xt is slower than the v620s, and we’re using layer splits (more on this in a moment), which means that when part of the pipeline runs through the 6700xt, it slows down the output. In fact, including the 6700xt costs me about 10t/s in inference speed while in practice only adding about 6-8GB to the inference VRAM pool (since it’s a 12GB card that’s also driving three displays).

With that said, I’ve continued to play with ROCm and try to learn WTF is going on, because:

- ROCm works with a wider variety of components
- ROCm holds out the promise of tensor parallelism (more on this in a moment as well)

And coming back to ROCm a few weeks later, with more experience under my belt, I’m better able to intuitively grok what’s happening and then confirm my suspicions as I go. So here’s what I’ve learned.

— § —

First, ROCm is about 30% faster than Vulkan on my hardware (dual v620s in x16 slots that are topologically just routed through the Z390 chipset). That’s not nothing. So if possible, ROCm is to be preferred.

Second, ROCm is actually verrry ragged when it comes to stability. Here are things I started to suspect and was then able to confirm with web searches (things that LLMs didn’t proactively tell me when I was trying to solve the ROCm problem before):

- ROCm doesn’t want any card in the pool to run beyond about 85% VRAM usage as shown by rocm-smi. If you pass 85% you’re into crashy territory, and if you hit 90% a crash is almost certainly imminent.
- ROCm really isn’t built for llama.cpp or layer splits; it’s designed for tensor parallelism with a high degree of symmetry. Almost any tensor split value for layer splits other than 1:1 (i.e. split evenly across all cards) will cause big stability problems. As in, crashes every 2-3 prompts.
- In general, ROCm also doesn’t really love MoE models. In most cases you’ll still get some crashiness even if everything else is perfect, but if you can solve everything else, it’ll be reduced, i.e. to once every few dozen prompts. This can be helped by running llama-server under a watchdog so that upon a crash it comes back up and we continue seamlessly, just with a slower response for that turn.
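The watchdog idea can be sketched in a few lines of Python. This is not the author’s actual script (the post doesn’t show it): `run_with_watchdog` is a hypothetical helper that relaunches a command whenever it exits with a nonzero status, logs each crash, and gives up after a set number of restarts so a permanently broken command can’t spin forever. In the setup described earlier, systemd’s own restart handling (for example `Restart=on-failure` in the unit file) would remain the outer safety net.

```python
import logging
import subprocess
import sys
import time

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(message)s")
log = logging.getLogger("watchdog")


def run_with_watchdog(cmd, max_restarts=5, backoff_s=2.0):
    """Run cmd, relaunching it after each crash; return the number of restarts.

    A clean exit (status 0) ends the loop. A nonzero exit is logged and the
    command is relaunched after a short pause, up to max_restarts times.
    """
    restarts = 0
    while True:
        result = subprocess.run(cmd)
        if result.returncode == 0:
            log.info("process exited cleanly")
            return restarts
        log.warning("process crashed with status %d", result.returncode)
        if restarts >= max_restarts:
            log.error("giving up after %d restarts", restarts)
            return restarts
        restarts += 1
        time.sleep(backoff_s)


if __name__ == "__main__":
    # Placeholder command; in practice this would be a llama-server invocation.
    run_with_watchdog([sys.executable, "-c", "print('service running')"])
```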

What I once thought mattered but probably didn’t:

- Being extraordinarily picky and suspicious about PCI-e address space under Linux; letting Linux map it with 4G+ decoding and BAR enabled is likely enough to address the cards.
- ROCm versions actually don’t seem to matter that much; gfx1030 is pretty well supported.
- All kinds of tweaks and environment variables that cause LLMs to repeatedly say “Aha, I found it! You need to…” but then don’t solve the problem.

Basically, the two sins I was committing were:

- Trying to squeeze the nicest quant and biggest context I could into the VRAM pool, i.e. if I was at 85% full I was like, “Oh, I can get a bigger quant, I still have 10GB free!” (Nope, doesn’t work that way.)
- Trying to run 3 cards in splits and trying to tune those splits so that all cards would fill up at the same time. (Miracle it ever worked at all while I was trying that.)
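The 85% rule can be checked before reaching for a bigger quant. A sketch assuming an even 1:1 split across two 32GB v620s and a known total footprint (weights plus KV cache and buffers); the 0.85 ceiling is this post’s empirical threshold, not a documented ROCm limit, and the example footprints are hypothetical.

```python
def per_card_usage(footprint_gb, card_vram_gb, n_cards):
    """Fraction of each card's VRAM used when the footprint is split evenly (1:1)."""
    return footprint_gb / n_cards / card_vram_gb


def fits_with_headroom(footprint_gb, card_vram_gb=32.0, n_cards=2, ceiling=0.85):
    """True if an even split keeps every card at or below the usage ceiling."""
    return per_card_usage(footprint_gb, card_vram_gb, n_cards) <= ceiling


if __name__ == "__main__":
    pool_gb = 2 * 32.0
    limit_gb = 0.85 * pool_gb
    # The "free" space that looks usable but isn't, once you're at the ceiling.
    print(f"ceiling: {limit_gb:.1f} GB of {pool_gb:.0f} GB; "
          f"looks free but isn't: {pool_gb - limit_gb:.1f} GB")
    for footprint in (50.0, 56.0):  # hypothetical model footprints in GB
        print(footprint, "fits" if fits_with_headroom(footprint) else "too big")
```

On a 64GB pool, the ceiling lands at 54.4GB, leaving 9.6GB that looks free but isn’t: the “I still have 10GB free!” trap described above.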

So while before I was trying to run Qwen 3.5 35B A3B at Q8_XL, and trying to tune --tensor-split so that all those % used counters matched exactly, now I’m strictly at 1:1, and I’ve had enough runs to see that if I set it to anything other than 1:1 we’re essentially guaranteed to crash within the first three turns.
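For reference, here is one way to assemble a llama-server command line that pins the split at 1:1. `-m`, `--n-gpu-layers`, `--split-mode`, `--tensor-split`, `--ctx-size`, `--host`, and `--port` are regular llama.cpp server options; `llama_server_args` is a hypothetical helper, and the model path, context size, and port are placeholders rather than the author’s settings.

```python
import shlex


def llama_server_args(model_path, n_cards=2, ctx_size=65536, port=8080):
    """Build a llama-server argument list with an even layer split."""
    even_split = ",".join(["1"] * n_cards)  # "1,1" for two cards
    return [
        "llama-server",
        "-m", model_path,
        "--n-gpu-layers", "999",     # offload all layers to the GPUs
        "--split-mode", "layer",     # split by layers across cards
        "--tensor-split", even_split,
        "--ctx-size", str(ctx_size),
        "--host", "127.0.0.1",
        "--port", str(port),
    ]


if __name__ == "__main__":
    print(shlex.join(llama_server_args("/models/model.gguf")))
```

Deriving the split string from the card count, rather than hand-tuning ratios, is the point: per this post, anything other than an even split was what triggered the crashes.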

— § —

What still doesn’t work?

Sadly, vLLM. It should be possible to run vLLM with tensor parallelism, which would theoretically give me better multi-context and a better /responses/ API, but there was a regression in the most recent versions that causes it to punt unless you can stand up P2P between the cards.

And, just as importantly, P2P between the cards. Two things on that point:

- I now understand that I have, fundamentally, the wrong CPU/mainboard architecture for this, because the Intel platforms at this price point only have 16 PCI-e lanes to the CPU, and only one slot runs direct; the rest of the x16 slots run through the chipset and are essentially x4 under the hood. So for a while, I was considering swapping out the Z390 and Core i9-9900k for an AMD Threadripper setup, though I think I’ve backed off of that. Threadripper gives each slot dedicated lanes to the CPU. It also enables P2P between cards.
- Happily (and unhappily), before I pulled the trigger, I learned that the v620 / Radeon Pro “Navi” cards were really meant for data center fractional gaming provisioning, not machine learning workloads, and thus they actually lack the hardware for P2P anyway. Not the end of the world, especially when you consider the price/performance here: I was able to put together 64GB of VRAM with compute and memory bandwidth that’s like double that of a 6700XT, all for like $500 in cash. That’s a tremendously good deal, even if it won’t reach the same performance level as true machine learning / inference hardware.

Note that there may still be some benefit to Threadripper, even without P2P, as the dedicated x16 lanes to the CPU for the two cards have far more bandwidth than the x4 link shared amongst the entire chipset-attached PCI-e bus (i.e. almost everything in the system that isn’t the 6700XT). However:

- I’m not sure exactly how much benefit there will be to making that round trip happen on a true, unshared x16 pipe vs. an x4 pipe, so it’s hard to measure value or ROI.
- The cheapo Threadrippers on the market (i.e. X399/TR4, last generation) are only PCI-e 3.0, which has half the bandwidth of PCI-e 4.0. So I’m not that inclined to shell out for PCI-e 3.0 for undetermined benefits, but I’m also not inclined to shell out for 4.0 at a much higher price for, still, undetermined benefits. So we wait.

— § —

So that’s the state of things. If I had it all to do again, what would I do differently?

- Get on Threadripper at the last rebuild (when I moved from an i7-3770k to the i9-9900k and the Z390 chipset). I was tempted, but I stuck with Intel for the faster single-thread interactive performance (web, photos, etc.). Who knew that a few months later LLMs would hit the mainstream? But in any case, the AMD platform is obviously better for local inference; Intel consumer is hobbled.
- Consider a different family of retired server hardware (Instinct or similar) on eBay. The AMD data center hardware is still the right move; it’s dirt cheap and readily available if you’re willing to do a bit of hacking. However, for inference, the advantage of more modern hardware with faster compute and higher bandwidth is offset by the ability to run P2P with tensor parallelism on slower, cheaper cards. So if you’re doing multi-card, there’s no reason not to go for the slower, cheaper, older hardware, which, since it can run P2P with parallelism, will end up at the same speed as a couple of v620s that can’t.
- Not bother replacing the old RX480 with a 6700XT, since the RX480 could also have run an embedding model, and adding the 6700XT to the pool proved not to be practical or worthwhile. Looking in from the outside before this all started, I was thinking, in part with help from LLMs, that it would be good to have three cards with the same compute architecture (Navi / gfx103x), and that the 6700XT with 12GB would add a few more GB to the pool. In practice, the LLMs were exactly wrong; there is basically no advantage to the 6700XT, and adding it to the pool makes things either slower or less stable or both.
- Not listen to LLMs so much or use them for search so much. My real unlock came when I started to Google search and skip past the AI results. AI has a lot of opinions about AI, but they’re all wrong. Even when you ask it to do web search. Better just to hang out in the repos and on GitHub and read the interactions.

And finally, for anyone looking to run v620s on Linux for inference, my kernel command line is:

pci=realloc,earlydump amdgpu.gpu_recovery=1 amdgpu.noretry=1 amdgpu.ras_enable=0 amdgpu.mcbp=0 iommu=pt intel_iommu=on pci=big_root_window pcie_aspm=off amdgpu.runpm=0 pcie_port_pm=off amdttm.pages_limit=16777216 ttm.pages_limit=16777216 amdttm.page_pool_size=1048576 ttm.page_pool_size=1048576 amdgpu.gartsize=4096

Pair this with BIOS settings that enable addressing above 4GB and that enable BAR and VT-d/IOMMU, and the cards will get seen. Crazy to remember that I spent the first day just trying to get the cards to post, and then, after that, to be seen by the Linux kernel.
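A few of those values are easier to sanity-check as sizes. Assuming 4 KiB pages (the x86-64 default), `ttm.pages_limit=16777216` works out to a 64 GiB cap and `ttm.page_pool_size=1048576` to a 4 GiB pool, while `amdgpu.gartsize` is specified in MiB, so 4096 means a 4 GiB GART. The sketch below parses a command line (a subset of the one above) and does that arithmetic; `parse_cmdline` and `memory_limits_gib` are hypothetical helpers, and on a running system the same parser could be fed the contents of `/proc/cmdline` to confirm the parameters actually took.

```python
PAGE_SIZE = 4096  # bytes; assumes standard 4 KiB pages on x86-64
GIB = 1024 ** 3

# A subset of the command line above, used as sample input.
SAMPLE = (
    "pci=realloc,earlydump iommu=pt intel_iommu=on pci=big_root_window "
    "ttm.pages_limit=16777216 ttm.page_pool_size=1048576 amdgpu.gartsize=4096"
)


def parse_cmdline(cmdline):
    """Map each kernel parameter name to the list of values it was given.

    Bare flags (no '=') get None. Repeated keys such as pci= keep all values
    in order. Quoted values containing spaces are not handled in this sketch.
    """
    params = {}
    for token in cmdline.split():
        key, sep, value = token.partition("=")
        params.setdefault(key, []).append(value if sep else None)
    return params


def memory_limits_gib(params):
    """Convert the TTM page counts and the GART size (MiB) into GiB."""
    pages_limit = int(params["ttm.pages_limit"][-1]) * PAGE_SIZE / GIB
    page_pool = int(params["ttm.page_pool_size"][-1]) * PAGE_SIZE / GIB
    gart = int(params["amdgpu.gartsize"][-1]) / 1024
    return pages_limit, page_pool, gart


if __name__ == "__main__":
    # On a live system, use open("/proc/cmdline").read() instead of SAMPLE.
    print(memory_limits_gib(parse_cmdline(SAMPLE)))
```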

I’ve learned a lot. Not sure how transferable it is, but it’s nice to be in a space where the smoke has cleared.

— § —

Bonus note:

I actually can run Qwen 3.5 122B A10B well on the two v620s at (say) Unsloth UD_IQ3, and I like its output a lot, better than Qwen 3.5 35B A3B at Q6_XL. So if you’re wanting to run a “big” model like that (at least, big for home office purposes), it’s totally doable. I get about 27 tokens/sec on inference, which is quite respectable. I have to do it with Vulkan, though, where I can push the memory use right to “full”; on ROCm we just don’t have enough space, given that ideally we need to stay below 80-85% use for stability purposes, and I don’t want to go more compressed than Q3.

Thing is, Qwen 3.6 35B A3B at Q6_XL with ROCm delivers ~55 tokens/sec, no MTP. Twice as fast. It’s really, really hard to sit and be patient for 122B when 35B is twice as fast and still… acceptable. So that’s where I am now. But if you’re wanting to run 122B or similar biggish MoE, UD_IQ3 and 27 tokens/sec is pretty damned good.
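To put the two speeds side by side, here is a small sketch that ignores prompt processing and assumes a 1,500-token answer (an arbitrary length, not from the post); only the two decode rates come from the text above.

```python
def generation_seconds(tokens, tokens_per_s):
    """Wall-clock time to generate `tokens` at a steady decode rate (prefill ignored)."""
    return tokens / tokens_per_s


if __name__ == "__main__":
    # Decode rates reported in this post.
    for label, rate in [("122B A10B, UD_IQ3, Vulkan", 27.0), ("35B A3B, Q6_XL, ROCm", 55.0)]:
        print(f"{label}: ~{generation_seconds(1500, rate):.0f} s for 1,500 tokens")
```

That is roughly 56 seconds versus 27 seconds for the same answer, which is the 2x gap described above.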

There’s this discourse, which picked up a bunch during the COVID years, about how the most essential workers in our society earn the least, and people then debate the value of the CEO or the elite white collar tech worker.

I’ve never been an EMT or a grocery store clerk, but there are a decent number of other things that I’ve been, in some combinations that are maybe not common. For example, I’ve been both an author and a professor, each for a number of years. I had to stop doing both of these things because, with very few exceptions, it’s simply not possible to earn a living doing them. They don’t pay a living wage. In some cases, they don’t pay even half a living wage.

The advances for my books were each on the order of $1,500 to $3,000 for trade nonfiction, and with the total sales life of a trade nonfiction book being a year or two and the total sales if you’re lucky being numbered in the tens of thousands if you really do well, all you had to do is write 50 books a year to make a living.

Similar story in academic life. You’re expected, as a matter of course, to publish. A lot. One paper can be as difficult as, if not more difficult than, writing an entire nonfiction trade book. And yet in academia, the pay for publishing is… zero. Zilch. Nada. You get the dollars for the classroom side of things. Which, for the only tenure track offer I ever got, was $30,000 per year for a 5/5 load (basically, you spend your entire waking life either in the classroom or awake at home at 3:00 am grading homework), with the chance to earn as much as $48,000-$50,000 if I made tenure in a decade by somehow publishing a ton.

I stopped doing these things because there is literally no way to make a living doing them for most people. They’re pastimes for the already wealthy.

And yet, they are objectively and subjectively the most consequential things I’ve ever done, or will ever do. I still hear from students who say I changed the trajectory of their lives, and that my classes were the most informative that they ever took. And I still hear from people who have read my books. Some of them are the only book on a given subject, and are in the Claude Anthropic settlement (i.e. what the AI knows about that topic… it learned from me).

The things that I’ve done since then have no lasting importance. My first book was in 1997 and is still of import today. Meanwhile, the stuff I do now is generally obsolete and discarded within 3-6 months at most, and only a handful of people will ever see it, and it holds no particular importance for humanity. Yet it pays multiples of anything I could ever have earned writing or teaching.

There’s a sort of econ 101 logic or boilerplate analysis about this that says that people like fulfilling work, ergo there’s a surplus of labor for it, and thus it pays nothing. No, it’s not about demand alone because in fact there has been captive demand, say, in higher education, with infinite government subsidy, for a good long time. But all of those dollars went to administrators and executives of various kinds, and basically none of it went to the instructors.

Similarly, the Claude Anthropic settlement lists 500,000 works (seven of mine among them) that were used to train the AI. The estimated market value of Anthropic at the moment is around $380 billion. If we get really conservative and say that these 500,000 books are only 5% of its value (which I think is a ridiculously small number, given the fact that people expect AIs to give authoritative answers), and that the training and knowledge are only 25% of the value of the AI (also ridiculously low, but just for effect), then that’s just short of $10,000 of value per work, or about $70,000 in value for my works. And of course Gemini and OpenAI were both trained on similar corpora and are worth significantly more, so if we just lowball it and say they have the same value, that’s like $200k in value.
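The estimate above, spelled out with the post’s own figures. None of these inputs are independent data; they are the lowball assumptions stated in the paragraph.

```python
VALUATION_USD = 380e9      # estimated Anthropic valuation cited above
BOOKS_SHARE = 0.05         # lowball: share of value attributable to the books
TRAINING_SHARE = 0.25      # lowball: share of value from training/knowledge
WORKS_IN_SETTLEMENT = 500_000
MY_WORKS = 7
COMPANIES = 3              # Anthropic, plus Gemini and OpenAI at the same lowball value

per_work = VALUATION_USD * BOOKS_SHARE * TRAINING_SHARE / WORKS_IN_SETTLEMENT
mine_one_company = per_work * MY_WORKS
mine_all = mine_one_company * COMPANIES

print(f"per work: ${per_work:,.0f}")
print(f"my seven works, one company: ${mine_one_company:,.0f}")
print(f"my seven works, three companies: ${mine_all:,.0f}")
```

That comes to $9,500 per work, $66,500 for seven works, and $199,500 across three companies, which is where the “just short of $10,000,” “$70,000,” and “$200k” figures come from.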

So it’s not that academic work or writing work isn’t in demand. Just like it’s not as though EMTs or grocery store clerks aren’t in demand. I know, this is kindergarten stuff. Back to supply again. People are just willing to do it for less.

The thing that dime-store econ can’t really tackle is the philosophical problem here, something that seems a defect in our society. We leave the things that really matter, and that are very much in demand, to be compensated on a supply/demand curve, so that people really can’t make much of a living doing them (or, in the case of academic work, or authorship, or teaching grade school for that matter, can’t make a living doing them at all). So what you get is high turnover and uneven quality.

I guess the thing I’m getting at is that there is a gap in the demand world, and it matches the enshittification of everything else. See, the demand isn’t for books, it’s for accurate, useful books. It’s not for academics, it’s for inspiring, mentoring academics who are legitimate experts. The demand isn’t for kindergarten teachers, it’s for good kindergarten teachers. This is the part that the econ books tend to gloss over, because it’s inconvenient.

The public doesn’t hire all of these functions. Book buyers don’t get to hire book writers, and parents don’t get to hire teachers. And this is where the moral problem comes. Because over and over again, the public is frustrated. Why are the experts wrong? Why does my grade school suck shit?

It’s because you didn’t get what you bargained for, what the demand was for, what you paid taxes for or bought the book for. Instead, you got the bad teacher, or the bad expert. Why? Because we won’t pay more. Why? Because someone, somewhere in the chain, and usually really the entire top half of it, is getting nice budget numbers for their PowerPoint decks by saying “we only need to pay X” and eliding the fact that what they’re doing when they pay X is, basically, scamming the public by taking their money and delivering a fake.

Of course I can hear the public school people freaking out now saying it’s a funding problem, but relax, the “up the chain” people here are the district level admins and union folk and of course the senators and congresspeople who once again are in it for themselves and won’t do the hard work of telling the public the truth.

At some level, the reason our economy is broken, and the reason our teachers suck, and the reason the experts are so wrong, is that we’ve had a moral collapse in our civilization. There’s no more Wilford Brimley voice coming out of people saying “I’m sorry, I’m not going to do that, it wouldn’t be right.” Everyone is willing to compromise to pad their own stats. Everyone is out for themselves. Nobody will pay more to do it the right way.

I can hear all the capitalism free market people here wading in trying to figure out if I’m just a Keynesian or if I’m a full on commie but the thing is, I’m old. I was alive in the ’70s and ’80s. And literally, literally you would hear people who could cut corners on a deal, or advertise and sell an inferior product, say things like “well, I know I could make a lot more money that way, but it just wouldn’t be right.” Or “I could claim to be the best in my yellow pages listing, but that would be dishonest, there are better than me in this city, but I don’t charge quite as much.”

That energy is gone. And that’s the point at which capitalism and free enterprise lose the public.

I don’t know, this is a nonsense rant from a non-economist that will no doubt cause a bunch of people to call me an idiot. But it’s not really about economics. It’s really me saying that once upon a time, people didn’t seize their full advantage because it “wouldn’t be right” and people cared a lot about “doing the right thing” and just as importantly, they knew that the “right thing” was not always the “most profitable” thing. This was lost, I think starting with Reagan, to market ideology that says that whatever the market does is inherently right, because Adam Smith is god.

That puts capitalism really on the same footing as communism; the world of men ceases to be a space of moral agency and responsibility and is instead just a place where you throw up your hands and say “I don’t have any choice in the matter, it’s all laws of history!”

I’m here to call bullshit on that. And really what this post is all about is just me reflecting on how stupid it is that the most important things I’ve ever done, that contributed the most to society, were the least well compensated, and as a result society lost my labor (and the labor of many others) doing them. Which is dumb. And no, don’t do the thing I just criticized and say “well if you were all that the market would have rewarded you.” Because YouTube is fucking full of worthless streamers who would improve society by dying, yet who are making absolute bank. The market has no morality.

Humans have that.

Well… had that.

So it’s been a long time since I sat in silence and made a blog post in the middle of the day. Maybe even years. Hard to say.

Thing is, there are so many forces militating against posting on your own blog these days. Or at least my own blog. As in:

- I’m a parent with two teens == busy, busy life
- Work wants 60+ hours a week from me and mostly gets it
- Almost any platform you use for anything has some sort of chat, commenting, or reviews that eats what you have to say about many things in life
- Now there’s also AI, with which you end up getting conversational despite yourself

So you don’t really have time to breathe, and then the things you think about your stuff go on Amazon reviews and the things you think about politics go on X or YouTube, and the questions you have and reflections you have are accidentally pounded out into GPT, or Claude, or Gemini, or OpenClaw on local inference (oh yes, I “have” all of the above) because you’re co-working with these things and then it’s like chatting with a co-worker.

And so, at the end of the day, when you finally get a second, your brain is glazed over and inaccessible on the one hand but that’s sort of inconsequential because on the other hand, it’s basically empty.

I don’t exactly know what’s different about today. I think I’m just getting older and grumpier and some of this stuff is starting to break down because I begin to feel like I (and many others) have been fully “virtualized” and I don’t like that. I think the closer you get to your own mortality, the less you like the idea of “virtual you.”

I mean, dead is dead, but data is certainly more dead than, say, a corpse. A corpse at least lies there for a few years. Your immortal soul, if such exists, is eternal. Your physical possessions are good for decades, or even generations in some cases, as long as your offspring see fit to continue to pass them down.

But virtual stuff? Made of bits? Anyone who has worked in software, and then looked back at the last five years of work and realized that, unlike many others, they’re not building a “body of work,” and in fact the things they have built usually only live for 3-6 months before zapping back out of existence again, understands that a “virtual you” exists in the same way that ClarisWorks or Netscape or the Metaverse exist, which is to say, not at all.

— § —

Meanwhile, on the point of local inference, the weird thing is that now that I have it set up and fully deployed and working well and robustly configured as a systemctl service pointing to LiteLLM as a fellow systemctl service with watchdogging and dashboarding and blah, blah, blah and calling tools and doing research and writing code, I don’t feel like I want to use it for anything.

I have this weird impulse to maybe just put all of it to bed and go outside and make three-legged stools.

I’d love to say that at least the experience was worth something, as in maybe it’s a business model or a useful skill to go and build out local inference machines for people with a bunch of stacked GPU cards on PCI-E X16 in Linux with some sort of repeatable deployment package, only despite claims of “shortages” and “supply chain trouble” over the last couple of years the channel is already absolutely full of purpose-built local inference computers that are effectively the next generation of PCs and that already make my local setup with a big fat case and three GPU cards just to get to 76GB VRAM look pretty ridiculous. Hello, Strix Halo and Mac Studio.

Once upon a time I’d have been excited about all of this but now as a person with student loans that I will not pay off within my lifetime who is in the process of prepping to leave SAVE, it all just seems dumb.

The social contract was never really that great, but now it’s pretty much a scam. And, as time has gone on, we’ve gone to this kind of post-linguistic-turn version of The Matrix in which we’re all farmed, yes, but we’re actually being farmed without compensation or freedom for our words, because it is words that power the economy for the billionaires.

But I digress.

— § —

The other thing worth mentioning is that we’re all lonely out here. Funny thing. I have all these friends in my age group, fellow Generation Xers, who I sometimes talk with.

With a single exception, we are all single, we all don’t/won’t date each other, we all regret just how disconnected and isolated everyone has become, how hard it is to make friends, how hard it is to find significant others, and how easy it is in the age of endless consumer life+self customization to arrive at the point at which you basically can’t really get along with other people as anything other than utilities anyway, in the same way that we are utilities for the billionaires.

If we had any guts, we’d all do what the hippies did and carry out the equivalent of a “turn on, tune in, drop out” move, only we apparently don’t have the guts so we all call our friends and talk about how we don’t have any friends with them and bemoan the fact that there’s no one to date and the fact that you can’t really date anyway because it just makes you hate people and realize how much you’re destined to be alone.

This is not the natural state of things. I don’t know whether it’s unique to Generation X, but I think not. From everything I hear, other generations have their versions of the same thing, even if it’s mostly not identical.

People say that social media and technology and wealth inequality have broken the social contract, but in fact what they have broken is us; the social contract’s failure is collateral damage farther down the line, as what a bunch of broken us voted for.

To fix us we would have to kill off half of tech and most of modern convenience and now of course AI, and lose the activist ethos that has basically destroyed everyone and everything, and just quit and be normal and talk to each other like people.

But fucking what?

Be normal and talk to each other like people?

No fucking way, we’d rather die.

— § —

Such is life in 2026. So instead, we’ll still die, but we’ll just do it alone. We’ll only talk to our friends when it doesn’t matter. We’ll only date people so long as we don’t care about them. We’ll only socialize so long as it’s with strangers, around banal, meme-land topics. And we’ll only vote for candidates we don’t respect.

Because this is America, and because we’re all modern, well-educated people.

I am coming to hate my country. This is not a Democrat thing or a Republican thing; they’re roughly the same. It’s just that we hate each other. We all know that we hate each other. Nobody hates Americans so much as Americans do.

It has become intolerable to live here. Everybody’s busy complaining about Trump right now, but the fact is that we long ago crossed the Rubicon; it’s got nothing to do with immigrants or race, really. You can be white and your neighbors can be white and you can be roughly the same class but you hate each other. You hate each other for existing, and you ultimately harass each other, whether directly or through voting or through rumors.

It’s not pleasant to live here. There’s no community. There’s no comity. There’s just hate. And it’s getting worse.

I’m as American as they come. And I’ve traveled internationally. I have no illusions that I will ever be anything other than an American.

But boy do I hate Americans. And they hate me.

We all hate each other.

Rest of the world, don’t be offended. However much you think we hate you, I can assure you that we hate each other more.

Fall was five minutes ago it seems, and yet it’s centuries later. The change in the world at large is obvious to everyone, and the change in my small world is just as transformative.

-

I moved wholesale from Mac OS back to Linux

-

I rebuilt my main machine to 76GB VRAM 3x GPU 128GB RAM Core i9

-

I’ve got 3x ThinkCentre M93p minis running NAS and AI agents, all new

-

My job has become about 75% managing AIs

-

And the benefits provider has changed, which transforms 401ks and health and vision and dental and all of that

-

My whole photo workflow has moved from Lightroom to digiKam + Darktable

-

Dog is old enough now that I’ve returned to working in the home office for the first time in two years

-

Kids are so teenage now that I rarely see them even when they’re here

-

Other switches include: web host 20i -> 101, password manager LastPass -> 1Password, notes database DEVONthink+Joplin -> Obsidian, even boots Blundstone -> Redback

I know all of this will sound minor or obscure to people but the point is: in terms of the minute-by-minute and the weekend-by-weekend, very few things in my life bear any similarity to what they looked like six months ago, and if you go back three years, almost nothing overlaps at all. You never see the rebirth coming until it’s already done.

It is such a wild moment. In some ways, everything old is new again—I was an OG Linux evangelist from 1993 to 2009, and I used to be the guy always on the bleeding edge, and I used to be hosted at 101 from like 2003 to 2023, and I used to wear Redbacks… So there is this weird sense of retro me and/or revival—and yet it’s mixed in with all kinds of crazy new, like father of driving-age teenagers and coding LLM models running on my own metal, which is now in a truly massive case to hold the massive vGPU cards.

This is just a post to placehold an inflection point, in case I wonder about it later.

It was here, and it felt wild, especially with what’s going on in the wider world right now. Who’s unmoored? I’m unmoored!

So that was several posts in a row where I was Eurekaing about figuring out how to finally get ROCm stable, shortly after which… it would break again without warning.

The thing about ROCm that I have now fully internalized is that I don’t understand it; it may be built in a way, or rely on things, that I simply don’t understand. It has periods of metastability.

You’ll hunt for new things, new SSDT files, new memory mappings, new kernel parameters, build options, versions, firmware files, etc. And right after a change, it will work!

“AHA!” you say, “I have found the magic thing I was missing. It’s smooth sailing from here on out!”

And it will work all day. Or maybe all week. You might push a couple million tokens through it. And then suddenly, it crashes. And once it has crashed, thereafter it will immediately crash again every time you start it. You’ll swear and wonder why. You’ll be frantic.

“This was just working. I used it all week! What’s changed?! If it was just random crashes that would be one thing, but we went from rock solid all week to it crashes every single start before first turn and I didn’t change a thing!”

You’ll reboot, clear CMOS, do card resets with rocm-smi… and eventually, though it doesn’t make any sense, start hunting for that next “tweak” that must actually be the one that you need.

And hours of searching and experimenting later, you’ll suddenly “find” one that enables you to start again. And not only does it start, but it’s stable! “I can ask it to do a task of 50 tool calls and it just rolls! Huzzah!”

Only no. In a day, or a week, it’ll suddenly stop and be unstartable again.

No more, I’m done.

— § —

Previously Vulkan had been rock solid every time, completely no drama, but I’d hesitated to use it because it was about 50% slower than ROCm, which seemed like too much to trade away… better to keep trying until I “figured ROCm out.”

But then I found someone who suggested removing the display card from the devices stack for Vulkan, and when I did… Boom. Not a 50% difference, but about a 5% difference. For no drama. And about 80% less power usage.

I’m sold. I’m Vulkan now on the two v620s and Qwen 3.5 35B A3B q8 (Bartowski). On the RX 6700XT, I’ll throw like a text and a visual embedding model maybe. Simple tools that will be of use.
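For anyone wanting to try the same thing, “removing the display card from the devices stack” amounts to telling llama.cpp’s Vulkan backend which devices it may use. Here’s a minimal sketch in Python, assuming a llama.cpp build whose Vulkan backend reads the `GGML_VK_VISIBLE_DEVICES` environment variable (a comma-separated list of device indices), and assuming, hypothetically, that the RX 6700XT enumerates as device 2. The real order is whatever your build lists at startup, and the model path is a placeholder.

```python
import os
import subprocess

# Hypothetical enumeration order: 0 and 1 are the v620s, 2 is the display card.
ALL_DEVICES = (0, 1, 2)
DISPLAY_CARD = 2


def visible_devices(all_indices, exclude):
    """Comma-separated Vulkan device indices to expose, minus the excluded ones."""
    return ",".join(str(i) for i in all_indices if i not in exclude)


def launch_server(model_path, all_indices=ALL_DEVICES, exclude=(DISPLAY_CARD,)):
    """Start llama-server with only the compute cards visible to Vulkan."""
    env = dict(os.environ)
    # Assumption: this build honors GGML_VK_VISIBLE_DEVICES.
    env["GGML_VK_VISIBLE_DEVICES"] = visible_devices(all_indices, set(exclude))
    cmd = ["llama-server", "-m", model_path, "-ngl", "99"]  # offload all layers
    return subprocess.Popen(cmd, env=env)


if __name__ == "__main__":
    print(visible_devices(ALL_DEVICES, {DISPLAY_CARD}))
```

The variable can just as well be set inline in the shell when starting the server; the wrapper only makes the exclusion explicit.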

— § —

Meanwhile, also worth keeping track of: every single Qwen 3.5 template out there is broken for llama.cpp because, it turns out, Qwen expects the template to do a bunch of fancy cleanup, but llama.cpp doesn’t support full Jinja, only a subset called Minja, because until five minutes ago that sort of “eh, whatevs, we’ll do it in the template” logic wasn’t really considered viable.

That creates a problem: all the templates are for jinja, but I needed a template for minja (in llama.cpp) so that we could get tool use working properly.

So I had Claude Opus review a bunch of the existing community jinja templates and then pick one to refactor, to the extent possible, for minja.

Here you go: template.jinja

Key and somewhat vexing note: for this to work properly, you also need to go into src/llama-grammar.cpp and change the value of MAX_REPETITION_THRESHOLD from 2,000 to:

#define MAX_REPETITION_THRESHOLD 200000

Because OpenClaw uses like six million parameters for some of its tools and that explodes the grammar enforcer (I still don’t fully understand whether this is a part of minja or not by convention), so once you have a template that actually works instead of breaks, it will seem to be a template that breaks instead of works until you recompile with that constant defined.
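One way to see in advance which tool will trip that limit is to scan the tool schemas the agent sends. Here’s a minimal sketch in Python, under two assumptions: that the limit is hit by bounded repetitions (`x{m,n}`) that llama.cpp builds from JSON-schema bounds such as `maxLength` and `maxItems`, and that 2000 is the stock threshold, as stated above. The function name and example schema are hypothetical.

```python
import json
import sys

# Stock value of MAX_REPETITION_THRESHOLD in src/llama-grammar.cpp, per the post.
DEFAULT_THRESHOLD = 2000

# Assumption: these JSON-schema bounds become x{m,n}-style repetitions
# when a tool's parameter schema is turned into a grammar.
BOUND_KEYS = ("minLength", "maxLength", "minItems", "maxItems")


def find_large_bounds(schema, threshold=DEFAULT_THRESHOLD, path="$"):
    """Return (path, keyword, value) for every bound larger than threshold."""
    hits = []
    if isinstance(schema, dict):
        for key, value in schema.items():
            if key in BOUND_KEYS and isinstance(value, int) and value > threshold:
                hits.append((path, key, value))
            else:
                hits.extend(find_large_bounds(value, threshold, f"{path}.{key}"))
    elif isinstance(schema, list):
        for i, item in enumerate(schema):
            hits.extend(find_large_bounds(item, threshold, f"{path}[{i}]"))
    return hits


if __name__ == "__main__":
    # Usage: python find_bounds.py tool_schema.json [...]
    for filename in sys.argv[1:]:
        with open(filename) as fh:
            for hit in find_large_bounds(json.load(fh)):
                print(filename, *hit)
```

Any hit above 2000 is a candidate for the “template that breaks instead of works” symptom until the constant is raised.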

— § —

I started all of this nearly two months ago. It’s taken a long time, but we’re finally feeling like we’re getting to the point where I can start to build soon, rather than just be playing with toys.

One day after posting that I thought I had the problem solved, more was needed. It turns out that Linux was not honoring the mainboard BIOS’s aperture setting, which was leading to stability on some runs and crashes on others.

So we also added:

amdgpu.gartsize=2048

I have the nagging feeling that even this may not kill it once and for all, and there is every chance that in the end, we will have to drop back to Vulkan.

But for now, we seem to be golden, with Q6 weights and 128k Q8 context cache doing 42-46 tokens/second.
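Since the whole problem was a setting not being honored, it’s worth confirming what the kernel actually applied. Here’s a minimal sketch in Python, assuming the standard `/sys/module/<module>/parameters/<name>` layout for module parameters, and that some of them (likely including `gartsize`, which amdgpu documents in MiB) are readable only by root, so an unreadable value comes back as `None`. The `ttm.pages_limit` value is the one from the kernel command line in the earlier post.

```python
from pathlib import Path

# Values asked for on the kernel command line.
EXPECTED = {
    ("amdgpu", "gartsize"): "2048",
    ("ttm", "pages_limit"): "25165824",
}


def read_module_param(module, param, sys_root="/sys"):
    """Return a module parameter's current value as text, or None if unreadable."""
    path = Path(sys_root) / "module" / module / "parameters" / param
    try:
        return path.read_text().strip()
    except (FileNotFoundError, PermissionError):
        return None


def mismatches(expected=EXPECTED, sys_root="/sys"):
    """Map 'module.param' -> (wanted, actual) for every value that did not take."""
    out = {}
    for (module, param), wanted in expected.items():
        actual = read_module_param(module, param, sys_root)
        if actual != wanted:
            out[f"{module}.{param}"] = (wanted, actual)
    return out


if __name__ == "__main__":
    for name, (wanted, actual) in mismatches().items():
        print(f"{name}: wanted {wanted}, kernel reports {actual}")
```

An empty result (run as root) means both values took; a `None` means the parameter was unreadable or isn’t exposed on that kernel.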

So I made a post a couple days ago about “the monster” but following that post, a few things had become clear:

-

ROCm was unstable, maybe even very unstable

-

Vulkan was much slower, about half as fast, but far more stable

-

I didn’t really know what I was doing (okay, I knew this going in)

I was happy to know that there was a path to booting into Mac OS without having to tear the system apart again, and that it only required editing and recompiling Radeon driver kexts 😛 but meanwhile, inference was crashing more often than I’d like. Sometimes very often. I won’t even tell you all of the things I’ve tried, but among the many tactics tried to identify the error and/or solve it, I tried:

-

Multiple models, at different quants, and from different providers

-

Multiple versions of ROCm, including 6.2, 6.3, 6.3.4, 6.4, 6.4.4, 7.0, 7.1.1, and 7.2

-

Multiple versions and forks of llama.cpp

-

Aphrodite and vLLM and even, for a minute, MLC LLM

-

Rearranging card order

-

An entire library of environment variables, llama command line options, and kernel command line arguments

-

Excluding the RX 6700XT card as the odd-man-out and just running the v620s in tandem

-

Many different context sizes and quantizations

Sometimes I would think I had it fixed because it had been 5 or maybe even 10 turns without a crash on ROCm!

…and then it would crash on turn 6 or 11.

Some things worked better than others. For example, llama.cpp pre-b8353 with a Q8 model from Bartowski was much more stable than the Q8 from Unsloth or llama-current. And running it on ROCm 6.2 or 6.3 was more stable than running it on ROCm 7.1.1 or 7.2. Also, using the software SMU via kernel parameter seemed to help some things. But we’d always end up in the same place: ROCm crash, back to Vulkan.

I must have decided to “just use Vulkan” 100 times, but the thing is, when ROCm is giving you 45-50 tokens/second, it’s hard to settle for 21-25 even if it’s stable. And stable it was…throughout it all, Vulkan never crashed.

— § —

The two key problems, as far as I could tell, were:

-

PCIe bus errors which I had sort of grumblingly admitted were just the result of trying to run data center hardware on consumer mainboards

-

Page faults that were by far the most common thing to take down llama when using an ROCm build of it

The solutions in my case were different for each of the two problems above.

1. PCIe Bus Errors

It turns out that the, um, less expensive (though very highly reviewed) Montech Century 1200w power supply I’d acquired from Amazon left something to be desired. I’d been so wrapped up in the exotic-lack-of-understanding that I felt around LLMs, and the “it’s not going to be quite right, it’s not consumer PC hardware” mentality that I hadn’t been monitoring voltage. When I did, I found that this PSU, which claimed to have 100A on the 12V rail, was running at 11.5V-11.6V at idle when the cards were, according to rocm-smi, only drawing about 5 watts each.

It should not be a surprise to anyone that when we started to push into load, this decreased further.

I’ve replaced that unit with a Corsair unit and now we’re at around 12.05V at idle and around 11.9V under full load. I can live with that. And the PCIe bus errors have gone away.

The lesson here is that “gamer power supplies” rated at 1200W are mostly marketing. They don’t really expect you to draw the 1200W, they expect you to run one really fat graphics card and want the “1200” number to be showing through the clear sides of your gamer case so that people can be impressed. When you actually try to pull 80-90 amps, they just can’t do it.

I keep having to re-learn this lesson build after build… Don’t try to get off cheaply on the power supply. Especially if you’re going to be running 3x RDNA2 cards, including two server cards that want 25 amps each on 12V. Be sure also, for those of you that are new to this, not to put both PCIe power connectors on a fat card like this on the same cable. Run two cables from the PSU or you’ll fry your PSU-side connector on the single cable.
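The arithmetic behind that lesson is just watts divided by volts. Here’s a minimal sketch in Python. The 25 A per server card comes from the paragraph above (about 300 W each at 12 V). The 230 W for the RX 6700XT and the 150 W for the rest of the 12 V load are placeholder assumptions, not measurements, and the ±5% window is the tolerance commonly cited for the ATX +12 V rail.

```python
RAIL_VOLTS = 12.0
ATX_TOLERANCE = 0.05  # +/-5% on the +12 V rail


def amps(watts, volts=RAIL_VOLTS):
    """Current drawn for a given power at a given rail voltage."""
    return watts / volts


def rail_load(card_watts, other_watts=0.0, volts=RAIL_VOLTS):
    """Total watts and amps on the 12 V rail for the cards plus everything else."""
    total = sum(card_watts) + other_watts
    return total, amps(total, volts)


def within_atx_tolerance(measured, nominal=RAIL_VOLTS, tol=ATX_TOLERANCE):
    """True if a measured rail voltage sits inside the nominal +/- tolerance window."""
    return nominal * (1 - tol) <= measured <= nominal * (1 + tol)


if __name__ == "__main__":
    # Two server cards at 25 A each, plus placeholder figures for the rest.
    watts, current = rail_load([300.0, 300.0, 230.0], other_watts=150.0)
    print(f"{watts:.0f} W -> {current:.1f} A at 12 V")
    # When the rail sags, the same 300 W card needs more current.
    print(f"{amps(300.0, 11.5):.1f} A per card at 11.5 V")
```

With those placeholders the rail lands in the 80-90 A range mentioned above, and a 300 W card pulls roughly 26 A once the rail sags to 11.5 V. By the ±5% yardstick, 11.5 V at idle is technically still in spec, just with almost no margin before load drags it lower.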

2. (THE BIGGIE) Page faults

These were the bane of my existence, and I’ve spent multiple nights this week up until the wee hours trying to find *anything* that would reduce the “crashiness” of ROCm. So . many . environment . variables. And llama arguments. And kernel arguments. And new builds of ROCm, of llama.cpp, and of half the libraries that they rely on.

The solution: credit where it’s due, I stumbled across this guy’s posts: https://medium.com/@agentz/how-to-fix-rocm-pytorch-memory-faults-on-amd-gpus-segmentation-fault-page-not-present-544b9f62f627

I almost didn’t try this suggestion that he makes:

ttm.pages_limit=25165824

It looks sketch and random and not really related to amdgpu or amdgpu-dkms or really even anything related to anything that I’d been working on. But I decided to look up what it did and once I did, it made a sort of sense, so I tried it. About three hours ago now. After battling this all week.

And voila… no more crashes. At least not yet. And we’re running inference on the fast Lemonade version of llama.cpp that is based on its own fork of ROCm and is pretty exotic. Previously this would page fault on the first turn. Maybe the second. But we’ve been up for several hours now and all appears well.

The above increases the size of the address space that the kernel is allowed to open up. I don’t know what the default is, but at this point, I believe I know that it’s too small for 76GB VRAM + 128GB DRAM, which is what I’ve been trying to make work.

Note that there is also:

ttm.page_pool_size

This pre-allocates the GTT address space in case you expect memory pressure. I’m not using it right now, but if I was planning to run a really big model, I’d probably set both of these values. Right now I’ve just got ttm.pages_limit set to 30000000, which is just a nudge up from where he had it.
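Since ttm.pages_limit is a count of pages, not bytes, it helps to convert before picking a number. Here’s a minimal sketch in Python, assuming standard 4 KiB pages on x86-64; the helper names are illustrative.

```python
PAGE_SIZE = 4096  # bytes; assumption: standard 4 KiB pages on x86-64
GIB = 2**30


def pages_to_gib(pages, page_size=PAGE_SIZE):
    """Convert a ttm page count to GiB."""
    return pages * page_size / GIB


def gib_to_pages(gib, page_size=PAGE_SIZE):
    """Page count for a target size in GiB, rounded down to whole pages."""
    return int(gib * GIB // page_size)


if __name__ == "__main__":
    for pages in (25165824, 30000000):
        print(f"ttm.pages_limit={pages} -> {pages_to_gib(pages):.1f} GiB")
    print(f"ttm.pages_limit for 100 GiB: {gib_to_pages(100)}")
```

By that arithmetic, 25165824 pages is exactly 96 GiB, and 30000000 is roughly 114 GiB, both below the 128GB of DRAM in this box.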

— § —

The amazing thing is that in a week of talking to every frontier hyperscaler LLM (Claude, ChatGPT, Gemini, etc.) and extensive Googling, I didn’t come across anyone discussing ttm.* parameters in relation to ROCm. Until tonight.

I think maybe this doesn’t come up all that often since it’s basically going to impact people who:

-

Are using multiple AMD GPUs on a consumer system

-

In a configuration (e.g., v620s) where you can end up with quite a bit of VRAM (more than triple the typical 8-24GB)

But many thanks to AgentZ on Medium and hopefully this helps someone else that’s banging their head against a wall.

And, just in case any of them matter (I am *not* messing about with this now that we have ROCm whirrled up and stable), here are my kernel command line args:

pci=realloc=off amdgpu.gpu_recovery=1 amdgpu.mcbp=0 amd_iommu=off intel_iommu=off pcie_aspm=off pcie_port_pm=off amdgpu.swSMU=1 amdgpu.cswr_enable=0 ttm.pages_limit=25165824

With this I am running 2x Radeon Pro v620s and 1x RX 6700XT (total 76GB VRAM, 3x Radeon RDNA2 GPUs) and, finally, they appear to be… stable.
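Bootloader edits don’t always make it to the running kernel, so it’s worth checking that these arguments were actually booted with. Here’s a minimal sketch in Python that compares the list above against `/proc/cmdline`. The comparison is purely textual, so it says nothing about whether a given parameter is recognized by your kernel or driver version, and the helper names are hypothetical.

```python
import shlex

# The arguments listed above, as they appear in the post.
WANTED = ("pci=realloc=off amdgpu.gpu_recovery=1 amdgpu.mcbp=0 amd_iommu=off "
          "intel_iommu=off pcie_aspm=off pcie_port_pm=off amdgpu.swSMU=1 "
          "amdgpu.cswr_enable=0 ttm.pages_limit=25165824")


def parse_cmdline(text):
    """Split a kernel command line into {name: value}; bare flags map to True."""
    params = {}
    for token in shlex.split(text):
        name, sep, value = token.partition("=")  # split on the first '=' only
        params[name] = value if sep else True
    return params


def missing_or_different(wanted, actual):
    """Return {name: (wanted, actual)} for every wanted arg the running kernel lacks."""
    want, have = parse_cmdline(wanted), parse_cmdline(actual)
    return {k: (v, have.get(k)) for k, v in want.items() if have.get(k) != v}


def main():
    try:
        with open("/proc/cmdline") as fh:
            running = fh.read()
    except FileNotFoundError:
        print("no /proc/cmdline on this system")
        return
    for name, (want, have) in missing_or_different(WANTED, running).items():
        print(f"{name}: wanted {want!r}, booted with {have!r}")


if __name__ == "__main__":
    main()
```

No output means every argument above is present with the same value.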

— § —

Important addendum: https://leapdragon.net/2026/03/23/more-was-needed/

It started as one of those weekends that you want back. Everyone’s been sick since Friday, O— worst of all. Sounds terrible, feels terrible, has hardly come out of his room, isn’t eating. M— started to feel rotten, too. And the same for dad.

And I have been trying to wrap up the first stage of the technology “rebuild” and return to the “user” role. There was a time when I wanted to hack all the time just to say I was hacking but that ended, like, 20 years ago. Now I sort of want to get things done.

The first hurdle in all of this was just getting three graphics cards into a case (two of them exceptionally long), powered, and cooled (two of them are designed for server farms with directional airflow and don’t have active cooling). Then the next hurdle was getting reliable, performant inference going with a model I liked. That turned out to be Qwen 3.5, though not without hiccups.

Then I got stuck on the next two things:

-

Plug OpenClaw into the local inference

-

Get Mac OS booting again (that’s right, dual-booting a Hackintosh with wild hardware)

Both of these things needed to be done, and both were non-trivial tasks. So to make my weekend worse, on top of everyone feeling various degrees of sick, Friday night and yesterday I spent most of my time feeling lousy and alternating between sleep and banging on these two problems.

— § —

I must have spent six hours yesterday on the Mac problem alone, and I have no idea how many times I was like:

<press reset>

<select Linux>

<log in>

$ mkdir /tmp/EFI

$ sudo mount -t auto /dev/sdc1 /tmp/EFI

<enter password>

$ sudo nano /tmp/EFI/EFI/OC/config.plist
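One small thing that can save a few of those reboot cycles is catching a broken config.plist before restarting. Here’s a minimal sketch in Python using the standard `plistlib` module. OpenCore ships its own, far more thorough validator (ocvalidate), so treat this only as a quick syntax gate. The section list is the usual set of OpenCore top-level keys, and the default path assumes the EFI partition is mounted at /tmp/EFI as above.

```python
import plistlib
import sys
from xml.parsers.expat import ExpatError

# Top-level sections an OpenCore config.plist normally contains.
OC_SECTIONS = ("ACPI", "Booter", "DeviceProperties", "Kernel",
               "Misc", "NVRAM", "PlatformInfo", "UEFI")


def check_bytes(raw):
    """Return (ok, message) for the raw bytes of a config.plist."""
    try:
        data = plistlib.loads(raw)
    except (ExpatError, ValueError) as exc:  # plistlib.InvalidFileException is a ValueError
        return False, f"unreadable plist: {exc}"
    if not isinstance(data, dict):
        return False, "top level is not a dictionary"
    missing = [s for s in OC_SECTIONS if s not in data]
    if missing:
        return False, "missing sections: " + ", ".join(missing)
    return True, "ok"


def check_config(path):
    """Same check for a file on disk, e.g. on the mounted EFI partition."""
    try:
        with open(path, "rb") as fh:
            return check_bytes(fh.read())
    except OSError as exc:
        return False, f"cannot open: {exc}"


if __name__ == "__main__":
    for p in sys.argv[1:] or ["/tmp/EFI/EFI/OC/config.plist"]:
        print(p, *check_config(p))
```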

Eventually I got frustrated enough to enlist the help of Claude (my own agent being down, more on this momentarily) to scour the web looking for answers. All kinds of wild stuff was tried in the OpenCore plist and with ACPI tables and so on. No matter what, it seemed like there was a hard hang around PCI enumeration, and I presumed the two v620s with their massive footprints in address space were the cause. Into late last night, between Claude and me, we just couldn’t get past PCI enumeration and mapping.

Claude was telling me that it was time to give up, that it just wasn’t in the cards: the Mac OS IOPCI stuff couldn’t cope with what I was trying to do, which was something Mac OS had never been intended to handle. We had spent hours trying to turn off PCIe slots, build SSDTs, directly patch memory with OpenCore shell scripts on bootup, and build .efi modules to rearrange PCI windows, but nothing seemed to work, and in fact nothing ever really seemed to change.

— § —

In the wee hours I pivoted over to OpenClaw, where I’d been having enough trouble with Qwen that I’d started downloading and trying other models instead, always to disappointment. The thing is, Qwen 3.5 35B A3B is just really good in certain ways. It’s blazing fast. It’s really smart. And it was perfect on my 76GB of VRAM (more on this in a bit) in that I could fit like an Unsloth dynamic q6_XL on it and have room for a massive context window at q8. It’s local inference that doesn’t feel like local inference.

Only, the other thing is, turns and tool calling were completely broken. Like, completely. It had been hard enough to get the model up and running—all kinds of errors, apparent incompatibilities, updates… and then we ended up in a state in which it would often lose the ability to call tools altogether, and would just pitifully announce it was going to do so, then do nothing, then apologize profusely when asked, then announce it was going to succeed this time, then do nothing, then say it couldn’t understand what was happening.

Of course, being new to running my own LLM, I was leaning heavily on LLMs to help me through, and they were coming up with all kinds of ideas and all kinds of opinions about Openclaw. We must have tried 10 different versions of Openclaw and 100 different (often hallucinated) configuration options for openclaw.json. We also tried vLLM (don’t bother with RDNA2, the tensor parallelism really requires better card-to-card links than PCIe, something that’s just not in the architecture, plus it really fights with just about every version of ROCm), Aphrodite, Ollama, LM Studio, a bunch of everyone’s hand-written proxies, and about six different front-ends for chat.

Nothing had worked, and I was very tired of complaining at LLMs while they complained at me as we tried to get my own LLM to behave with my own agent.

Then, as is so often the case, a less direct search somehow opened up the channels of serendipity enough to make a massive difference. I searched Google for “local models similar to Qwen 3.5 35b but with more stable tool calling” and then, without warning, Gemini opened up with all of the answers that it had previously hidden from me in our hours of chatting and banging on openclaw.json and template.jinja files. It suddenly just rattled off a list:

-

Qwen 3.5 MoE models mix tool calling outputs into <think> phases, which nothing on earth can handle right now

-

Qwen 3.5 is also a bit of a wild child as you increase temperature and response length (which I have liked), but that wildness includes its approach to JSON and XML

-

Llama.cpp and Openclaw both have aggressive timeouts

-

All together this means turns that platforms like Openclaw misinterpret and may even miss, leaving turns or entire sessions in undefined states

-

Solution: set 20-minute timeouts everywhere, turn off Qwen’s thinking mode at the inference level, and reduce temperature from 1+ to 0.7 or less

I implemented these fixes in about five minutes in the middle of the night, and voila, Qwen 3.5 35B A3B was suddenly a rock solid tool user in Openclaw. Like WTF. But also, in a good way.
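For anyone hitting the same wall, the inference-side half of those fixes can be expressed as request settings. Here’s a minimal sketch in Python against llama-server’s OpenAI-compatible `/v1/chat/completions` endpoint. It assumes a recent build that passes `chat_template_kwargs` through to the chat template, and that the Qwen 3.5 template reads an `enable_thinking` switch the way Qwen3-family templates do. The base URL is a placeholder. The agent-side (OpenClaw) timeout isn’t shown because its config key isn’t given here; on the server side, check `llama-server --help` for its timeout option.

```python
import json
import urllib.request

TIMEOUT_S = 20 * 60    # 20-minute timeout, per the fix above
MAX_TEMPERATURE = 0.7  # "0.7 or less"


def build_payload(messages, temperature=MAX_TEMPERATURE, thinking=False):
    """Chat request with thinking disabled and temperature capped at 0.7."""
    return {
        "messages": messages,
        "temperature": min(temperature, MAX_TEMPERATURE),
        # Assumption: the server forwards these to the chat template.
        "chat_template_kwargs": {"enable_thinking": thinking},
    }


def chat(messages, base_url="http://127.0.0.1:8080", timeout=TIMEOUT_S):
    """POST one chat turn and return the decoded JSON response."""
    body = json.dumps(build_payload(messages)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)
```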

Well, obviously what I did is put it to work on my entire chat summaries with Claude and GPT working on the Hackintosh problem and ask it to build me a library of findings about each area of research, as well as a final THEORY.md outlining its own theory, based on all the research, of just what was breaking in Mac OS so that we could try to fix it, since Claude and GPT were out of ideas.

— § —

So today I came back in the morning, and boom. Nestled in the guts of THEORY.md was the point that just because the hang came around where Mac OS was reporting that it was initializing PCI, that did not mean PCI initialization was causing the hang. Since my BIOS version (already checked) was good, my BIOS settings were right, the config.plist had been tested a million times, and all the effort spent trying to make the cards disappear for Mac OS in one way or another had not worked, it seemed likely that a kext, not the kernel, was causing the hang, and that the proximity of the hang to output about PCI enumeration had fooled us all.

And, at that moment, it all fell into place. I had opted for a display card with the same architecture as the two Radeon Pro v620 units, with the idea that we could get another 12GB that way for inference. But what it also meant was that NootRX (the kext that enables certain Navi-based hardware) might be choking on the cards in kextland, rather than this being about BARs and address space, etc.

Pulled the NootRX source and that was the answer. NootRX grabs one Navi card, whichever is the first it finds on the bus, and tries to use it for display.

It was likely grabbing a v620 first and we might not even be hung at all, there just aren’t any display outputs on a v620. And since we had the source, we had the power to fix the kext…
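The failure mode is easy to model. Here’s a minimal sketch in Python, not NootRX’s actual code (which is a C++ kext). It contrasts taking the first Navi card on the bus, as described above, with taking the first Navi card that actually has display outputs. The PCI addresses and card list are hypothetical, and this does not reproduce whatever change was made to the kext source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSlot:
    bus: str           # PCI address, fixed-width so string order matches bus order
    name: str
    navi: bool         # Navi-family (RDNA2) chip
    has_outputs: bool  # has physical display connectors


def first_navi(slots):
    """Behavior described above: claim the first Navi card found on the bus."""
    for slot in sorted(slots, key=lambda s: s.bus):
        if slot.navi:
            return slot
    return None


def first_navi_with_outputs(slots):
    """Alternative: skip headless cards and claim the first one that can drive a display."""
    for slot in sorted(slots, key=lambda s: s.bus):
        if slot.navi and slot.has_outputs:
            return slot
    return None


# Hypothetical layout: both v620s enumerate ahead of the display card.
EXAMPLE_SLOTS = [
    GpuSlot("0000:0a:00.0", "RX 6700 XT", navi=True, has_outputs=True),
    GpuSlot("0000:03:00.0", "Radeon Pro V620", navi=True, has_outputs=False),
    GpuSlot("0000:07:00.0", "Radeon Pro V620", navi=True, has_outputs=False),
]

if __name__ == "__main__":
    print("first Navi:", first_navi(EXAMPLE_SLOTS).name)
    print("first Navi with outputs:", first_navi_with_outputs(EXAMPLE_SLOTS).name)
```

In this layout the first rule lands on a headless v620, which from the outside looks exactly like a hang with no picture.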

— § —

So anyway, tonight is here, and:

-

Yes, inference is up and running

-

Yes, Openclaw is up and running on it, including solid, stable, tool use and long projects

-

Yes, Mac OS and Linux are dual booting again on this now wilder-ass PC with 76GB VRAM, 128GB DRAM, 3 RDNA2 GPUs, and 16 hardware CPU threads

-

I can move on

Point being, the weekend ended on a high note, and I’m that much closer to re-architecting my computing and data life.

— § —

Next up:

-

OwnCloud and a pile of non-HFS+ storage on a dedicated host

-

Plus migrate a bunch of data off of the seemingly infinite universe of HFS+ I have here

It’s happening. Local inference and a future trajectory that is on Linux once again. Meanwhile, it’s late at night (or even wee hours) with the work week ahead. Not even sure this is coherent. So… end.
